CANCUN--The idea of using misinformation and disinformation to influence people, groups, and nations is nearly as old as recorded history. Egyptian pharaohs used it, as did the Nazis, Soviets, and any intelligence agency you’d care to name. But in recent years disinformation has begun to cross over into the security world as organized attack groups and individual operators have made it part of their arsenals, and in some cases their main goal.

The last few years have produced a long list of examples of hacking groups either using disinformation as part of their campaigns or using hacking campaigns in order to spread disinformation. A prime example is Guccifer 2.0, the attacker who claims to have hacked the Democratic National Committee and later provided sensitive documents to news organizations. That attacker is widely believed to be tied to Russian intelligence, but has claimed to be Romanian.

“We interacted with him on Twitter and said let’s have [a] conversation in Romanian, but he said, oh, I don’t really speak it,” Matt Tait, senior cybersecurity fellow at the Robert S. Strauss Center for International Security and Law at the University of Texas at Austin, said during a talk on the history of disinformation at the Kaspersky Security Analyst Summit here Thursday.

Tait, a former security specialist at GCHQ, the UK’s signals intelligence agency, said history is littered with political parties, rulers, and small groups using disinformation as a way to create problems for their opponents and then use those problems for their own gain. Egyptian pharaohs did it on a grand scale, building massive temples to themselves to convince their predecessors and the gods of their worthiness for eternal life.

“If you’re the pharaoh and you want to get into the afterlife but you suck at fighting and haven’t made any conquests, you need to trick the gods into thinking you’re really good at fighting. So you create Luxor,” Tait said.

“It turns out people suck at making decisions. Tribalism and confirmation bias play a big part in how we make those decisions. When you say a lie enough times, people come to believe it.”

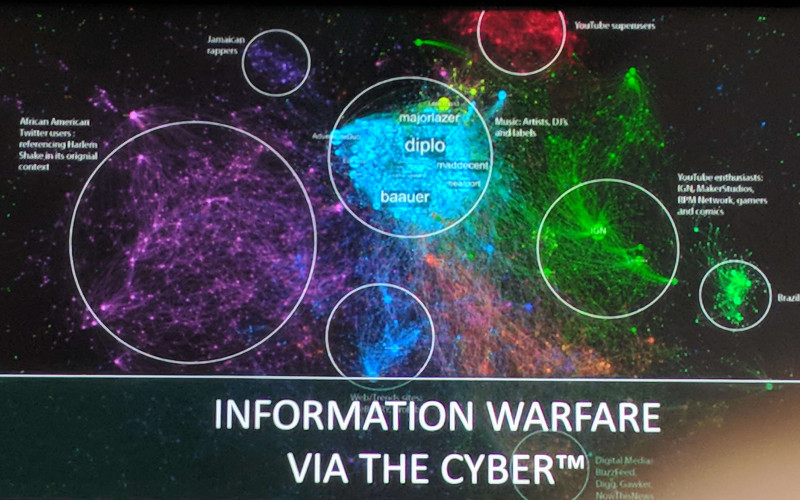

The Cold War itself was essentially a decades-long information operation, with powerful adversaries using whatever resources they had at hand to advance their agendas. Those resources included mass media, and the Internet has now become a key part of disinformation operations for governments and individuals alike. What once took decades and thousands of people can now be accomplished with a few Twitter bots.

“In some of these operations, the same bots are tweeting on both sides of an issue. Why would they do that?” Tait said. “They’re trying to drive a wedge into a crack that already exists.”

The issue, Tait said, is that people don’t know whom to trust, especially online.

“We need better ways of much more quickly identifying forged information. We also need to be quite careful with the way we’re building social networks. We assume the people using these things are ordinary users, but it turns out there’s a lot of bots and they’re being used strategically by many people.”

Disinformation has become a key component of hacking campaigns.